Adding Additional Content Types to my Classic ASP and URL Rewrite Samples for Dynamic SEO Functionality

28 February 2014 • by Bob • URL Rewrite, SEO, Classic ASP

In December of 2012 I wrote a blog titled "Using Classic ASP and URL Rewrite for Dynamic SEO Functionality", in which I described how to use Classic ASP and URL Rewrite to dynamically generate Robots.txt and Sitemap.xml or Sitemap.txt files. I received a bunch of requests for additional information, so I wrote a follow-up blog this past November titled "Revisiting My Classic ASP and URL Rewrite for Dynamic SEO Functionality Examples", which illustrated how to limit the sitemap output to specific sections of your website.

That being said, I continue to receive requests for additional ways to stretch those samples, so I thought that I would write at least a couple of blogs on the subject. With that in mind, for today I wanted to show how you can add additional content types to the samples.

Overview

Here is a common question that I have been asked by several people:

"The example only works with *.html files; how do I include my other files?"

That's a great question. Additional content types are really easy to implement, and the majority of the code from my original blog remains unchanged. Here's the file-by-file breakdown of the changes that need to be made:

| Filename | Changes |
|---|---|
| Robots.asp | None |
| Sitemap.asp | See the sample later in this blog |
| Web.config | None |

If you are already using the files from my original blog, no changes need to be made to your Robots.asp file or the URL Rewrite rules in your Web.config file because the updates in this blog will only impact the output from the Sitemap.asp file.

Updating the Sitemap.asp File

My original sample contained a line of code which read "If StrComp(strExt,"html",vbTextCompare)=0 Then" and this line was used to restrict the sitemap output to static *.html files. For this new sample I need to make two changes:

- I add a string constant that contains a pipe-delimited list of the file extensions to include in the output.

- I replace the line of code that performs the comparison, so that each file's extension is checked against that list.

Note: I define the constant near the beginning of the file so that it's easy to find in this sample; I would normally define that constant elsewhere in the code.

<%

Option Explicit

On Error Resume Next

Const strContentTypes = "htm|html|asp|aspx|txt"

Response.Clear

Response.Buffer = True

Response.AddHeader "Connection", "Keep-Alive"

Response.CacheControl = "public"

Dim strFolderArray, lngFolderArray

Dim strUrlRoot, strPhysicalRoot, strFormat

Dim strUrlRelative, strExt

Dim objFSO, objFolder, objFile

strPhysicalRoot = Server.MapPath("/")

Set objFSO = Server.CreateObject("Scripting.Filesystemobject")

strUrlRoot = "http://" & Request.ServerVariables("HTTP_HOST")

' Check for XML or TXT format.

If UCase(Trim(Request("format")))="XML" Then

strFormat = "XML"

Response.ContentType = "text/xml"

Else

strFormat = "TXT"

Response.ContentType = "text/plain"

End If

' Add the UTF-8 Byte Order Mark.

Response.Write Chr(CByte("&hEF"))

Response.Write Chr(CByte("&hBB"))

Response.Write Chr(CByte("&hBF"))

If strFormat = "XML" Then

Response.Write "<?xml version=""1.0"" encoding=""UTF-8""?>" & vbCrLf

Response.Write "<urlset xmlns=""http://www.sitemaps.org/schemas/sitemap/0.9"">" & vbCrLf

End if

' Always output the root of the website.

Call WriteUrl(strUrlRoot,Now,"weekly",strFormat)

' --------------------------------------------------

' This following section contains the logic to parse

' the directory tree and return URLs based on the

' files that it locates.

' --------------------------------------------------

strFolderArray = GetFolderTree(strPhysicalRoot)

For lngFolderArray = 1 to UBound(strFolderArray)

strUrlRelative = Replace(Mid(strFolderArray(lngFolderArray),Len(strPhysicalRoot)+1),"\","/")

Set objFolder = objFSO.GetFolder(Server.MapPath("." & strUrlRelative))

For Each objFile in objFolder.Files

strExt = objFSO.GetExtensionName(objFile.Name)

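' Wrap the list and the extension in "|" delimiters so that only whole entries
' match; a plain substring test would also accept "ht", "sp", or an empty extension.
If InStr(1,"|" & strContentTypes & "|","|" & strExt & "|",vbTextCompare) > 0 Then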

If StrComp(Left(objFile.Name,6),"google",vbTextCompare)<>0 Then

Call WriteUrl(strUrlRoot & strUrlRelative & "/" & objFile.Name, objFile.DateLastModified, "weekly", strFormat)

End If

End If

Next

Next

' --------------------------------------------------

' End of file system loop.

' --------------------------------------------------

If strFormat = "XML" Then

Response.Write "</urlset>"

End If

Response.End

' ======================================================================

'

' Outputs a sitemap URL to the client in XML or TXT format.

'

' tmpStrFreq = always|hourly|daily|weekly|monthly|yearly|never

' tmpStrFormat = TXT|XML

'

' ======================================================================

Sub WriteUrl(tmpStrUrl,tmpLastModified,tmpStrFreq,tmpStrFormat)

On Error Resume Next

Dim tmpDate : tmpDate = CDate(tmpLastModified)

' Check if the request is for XML or TXT and return the appropriate syntax.

If tmpStrFormat = "XML" Then

Response.Write " <url>" & vbCrLf

Response.Write " <loc>" & Server.HtmlEncode(tmpStrUrl) & "</loc>" & vbCrLf

Response.Write " <lastmod>" & Year(tmpLastModified) & "-" & Right("0" & Month(tmpLastModified),2) & "-" & Right("0" & Day(tmpLastModified),2) & "</lastmod>" & vbCrLf

Response.Write " <changefreq>" & tmpStrFreq & "</changefreq>" & vbCrLf

Response.Write " </url>" & vbCrLf

Else

Response.Write tmpStrUrl & vbCrLf

End If

End Sub

' ======================================================================

'

' Returns a string array of folders under a root path

'

' ======================================================================

Function GetFolderTree(strBaseFolder)

Dim tmpFolderCount,tmpBaseCount

Dim tmpFolders()

Dim tmpFSO,tmpFolder,tmpSubFolder

' Define the initial values for the folder counters.

tmpFolderCount = 1

tmpBaseCount = 0

' Dimension an array to hold the folder names.

ReDim tmpFolders(1)

' Store the root folder in the array.

tmpFolders(tmpFolderCount) = strBaseFolder

' Create file system object.

Set tmpFSO = Server.CreateObject("Scripting.Filesystemobject")

' Loop while we still have folders to process.

While tmpFolderCount <> tmpBaseCount

' Set up a folder object to a base folder.

Set tmpFolder = tmpFSO.GetFolder(tmpFolders(tmpBaseCount+1))

' Loop through the collection of subfolders for the base folder.

For Each tmpSubFolder In tmpFolder.SubFolders

' Increment the folder count.

tmpFolderCount = tmpFolderCount + 1

' Increase the array size

ReDim Preserve tmpFolders(tmpFolderCount)

' Store the folder name in the array.

tmpFolders(tmpFolderCount) = tmpSubFolder.Path

Next

' Increment the base folder counter.

tmpBaseCount = tmpBaseCount + 1

Wend

GetFolderTree = tmpFolders

End Function

%>

That's it. Pretty easy, eh?
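One subtlety with checking extensions against a delimited list: a plain substring test such as InStr(1,strContentTypes,strExt,vbTextCompare) matches fragments as well as whole entries. An extension like "ht" or "sp" is found inside "htm|html|asp|aspx|txt", and InStr returns the start position when the search string is empty, so files with no extension would pass as well. The following Python sketch is only an illustration of the logic, not ASP code. It compares that substring check with a delimited check that wraps both the list and the extension in "|" characters. The equivalent VBScript would be InStr(1,"|" & strContentTypes & "|","|" & strExt & "|",vbTextCompare) > 0.

```python
# Python illustration of the Sitemap.asp extension check (not ASP code).
CONTENT_TYPES = "htm|html|asp|aspx|txt"  # same value as strContentTypes


def substring_match(ext: str) -> bool:
    """Like InStr(1, strContentTypes, strExt, vbTextCompare): any substring hit counts.

    Python's `"" in s` is True, just as InStr returns the start position for an
    empty search string, so files without an extension would also match.
    """
    return ext.lower() in CONTENT_TYPES.lower()


def delimited_match(ext: str) -> bool:
    """Wrap the list and the extension in '|' so only whole entries match."""
    return f"|{ext.lower()}|" in f"|{CONTENT_TYPES.lower()}|"


if __name__ == "__main__":
    for ext in ["html", "HTM", "ht", "sp", "", "config"]:
        print(repr(ext), substring_match(ext), delimited_match(ext))
```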

I have also received several requests about creating a sitemap which contains URLs with query strings, but I'll cover that scenario in a later blog.

Note: This blog was originally posted at http://blogs.msdn.com/robert_mcmurray/

Revisiting My Classic ASP and URL Rewrite for Dynamic SEO Functionality Examples

20 November 2013 • by Bob • Classic ASP, IIS, SEO, URL Rewrite

Last year I wrote a blog titled Using Classic ASP and URL Rewrite for Dynamic SEO Functionality, in which I described how you could combine Classic ASP and the URL Rewrite module for IIS to dynamically create Robots.txt and Sitemap.xml files for your website, thereby helping with your Search Engine Optimization (SEO) results. A few weeks ago I had a follow-up question that I thought was worth answering in a blog post.

Overview

Here is the question that I was asked:

"What if I don't want to include all dynamic pages in sitemap.xml but only a select few or some in certain directories because I don't want bots to crawl all of them. What can I do?"

That's a great question, and it wasn't tremendously difficult for me to update my original code samples to address this request. First of all, the majority of the code from my last blog remains unchanged. Here's the file-by-file breakdown of the changes that need to be made:

| Filename | Changes |
|---|---|
| Robots.asp | None |
| Sitemap.asp | See the sample later in this blog |
| Web.config | None |

So if you are already using the files from my original blog, you don't need to change your Robots.asp file or the URL Rewrite rules in your Web.config file, because the question only concerns the files that are returned in the output for Sitemap.xml.

Updating the Necessary Files

The good news is that I wrote most of the heavy-duty code in my last blog, so only a few changes were needed to accommodate the requested functionality. The main difference is this: the original Sitemap.asp file contained a section that parsed the entire website and listed all of the files in it. This new version moves that section of code into a separate ParseFolder subroutine, and you pass it the name of a folder to parse recursively. This allows you to specify only those folders within your website that you want in the resulting sitemap output.

With that being said, here's the new code for the Sitemap.asp file:

<%

Option Explicit

On Error Resume Next

Response.Clear

Response.Buffer = True

Response.AddHeader "Connection", "Keep-Alive"

Response.CacheControl = "public"

Dim strUrlRoot, strPhysicalRoot, strFormat

Dim objFSO, objFolder, objFile

strPhysicalRoot = Server.MapPath("/")

Set objFSO = Server.CreateObject("Scripting.Filesystemobject")

strUrlRoot = "http://" & Request.ServerVariables("HTTP_HOST")

' Check for XML or TXT format.

If UCase(Trim(Request("format")))="XML" Then

strFormat = "XML"

Response.ContentType = "text/xml"

Else

strFormat = "TXT"

Response.ContentType = "text/plain"

End If

' Add the UTF-8 Byte Order Mark.

Response.Write Chr(CByte("&hEF"))

Response.Write Chr(CByte("&hBB"))

Response.Write Chr(CByte("&hBF"))

If strFormat = "XML" Then

Response.Write "<?xml version=""1.0"" encoding=""UTF-8""?>" & vbCrLf

Response.Write "<urlset xmlns=""http://www.sitemaps.org/schemas/sitemap/0.9"">" & vbCrLf

End if

' Always output the root of the website.

Call WriteUrl(strUrlRoot,Now,"weekly",strFormat)

' Output only specific folders.

Call ParseFolder("/marketing")

Call ParseFolder("/sales")

Call ParseFolder("/hr/jobs")

' --------------------------------------------------

' End of the folder-specific output.

' --------------------------------------------------

If strFormat = "XML" Then

Response.Write "</urlset>"

End If

Response.End

' ======================================================================

'

' Recursively walks a folder path and returns URLs based on the

' static *.html files that it locates.

'

' strParentFolder = The site-relative folder to walk, e.g. "/sales"

'

' ======================================================================

Sub ParseFolder(strParentFolder)

On Error Resume Next

Dim strChildFolders, lngChildFolders

Dim strUrlRelative, strExt

' Get the list of child folders under a parent folder.

strChildFolders = GetFolderTree(Server.MapPath(strParentFolder))

' Loop through the collection of folders.

For lngChildFolders = 1 to UBound(strChildFolders)

strUrlRelative = Replace(Mid(strChildFolders(lngChildFolders),Len(strPhysicalRoot)+1),"\","/")

Set objFolder = objFSO.GetFolder(Server.MapPath("." & strUrlRelative))

' Loop through the collection of files.

For Each objFile in objFolder.Files

strExt = objFSO.GetExtensionName(objFile.Name)

If StrComp(strExt,"html",vbTextCompare)=0 Then

If StrComp(Left(objFile.Name,6),"google",vbTextCompare)<>0 Then

Call WriteUrl(strUrlRoot & strUrlRelative & "/" & objFile.Name, objFile.DateLastModified, "weekly", strFormat)

End If

End If

Next

Next

End Sub

' ======================================================================

'

' Outputs a sitemap URL to the client in XML or TXT format.

'

' tmpStrFreq = always|hourly|daily|weekly|monthly|yearly|never

' tmpStrFormat = TXT|XML

'

' ======================================================================

Sub WriteUrl(tmpStrUrl,tmpLastModified,tmpStrFreq,tmpStrFormat)

On Error Resume Next

Dim tmpDate : tmpDate = CDate(tmpLastModified)

' Check if the request is for XML or TXT and return the appropriate syntax.

If tmpStrFormat = "XML" Then

Response.Write " <url>" & vbCrLf

Response.Write " <loc>" & Server.HtmlEncode(tmpStrUrl) & "</loc>" & vbCrLf

Response.Write " <lastmod>" & Year(tmpLastModified) & "-" & Right("0" & Month(tmpLastModified),2) & "-" & Right("0" & Day(tmpLastModified),2) & "</lastmod>" & vbCrLf

Response.Write " <changefreq>" & tmpStrFreq & "</changefreq>" & vbCrLf

Response.Write " </url>" & vbCrLf

Else

Response.Write tmpStrUrl & vbCrLf

End If

End Sub

' ======================================================================

'

' Returns a string array of folders under a root path

'

' ======================================================================

Function GetFolderTree(strBaseFolder)

Dim tmpFolderCount,tmpBaseCount

Dim tmpFolders()

Dim tmpFSO,tmpFolder,tmpSubFolder

' Define the initial values for the folder counters.

tmpFolderCount = 1

tmpBaseCount = 0

' Dimension an array to hold the folder names.

ReDim tmpFolders(1)

' Store the root folder in the array.

tmpFolders(tmpFolderCount) = strBaseFolder

' Create file system object.

Set tmpFSO = Server.CreateObject("Scripting.Filesystemobject")

' Loop while we still have folders to process.

While tmpFolderCount <> tmpBaseCount

' Set up a folder object to a base folder.

Set tmpFolder = tmpFSO.GetFolder(tmpFolders(tmpBaseCount+1))

' Loop through the collection of subfolders for the base folder.

For Each tmpSubFolder In tmpFolder.SubFolders

' Increment the folder count.

tmpFolderCount = tmpFolderCount + 1

' Increase the array size

ReDim Preserve tmpFolders(tmpFolderCount)

' Store the folder name in the array.

tmpFolders(tmpFolderCount) = tmpSubFolder.Path

Next

' Increment the base folder counter.

tmpBaseCount = tmpBaseCount + 1

Wend

GetFolderTree = tmpFolders

End Function

%>

As you can see, the code is largely unchanged from my previous blog.
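You may want to check which URLs a given folder list will produce before you deploy the page. The logic of ParseFolder is easy to model outside of IIS. The following is a minimal Python sketch, not a replacement for Sitemap.asp. The folder names, file names, and the http://www.example.com root are placeholders, os.walk stands in for the GetFolderTree function, and the hypothetical demo() helper builds a throwaway directory tree for the example:

```python
import os
import tempfile
from pathlib import Path


def sitemap_urls(physical_root, url_root, folders):
    """Yield URLs for *.html files under selected folders, similar to ParseFolder.

    physical_root: the site's root directory (what Server.MapPath("/") returns)
    url_root:      scheme and host, e.g. "http://www.example.com" (placeholder)
    folders:       site-relative folders such as "/marketing" or "/hr/jobs"
    """
    root = Path(physical_root)
    for folder in folders:
        # os.walk visits the folder itself and then every subfolder beneath it.
        for dirpath, dirnames, filenames in os.walk(root / folder.strip("/")):
            dirnames.sort()  # visit subfolders in a stable order
            for name in sorted(filenames):
                ext = os.path.splitext(name)[1].lstrip(".")
                # Same filters as the ASP sample: *.html only, skip google* files.
                if ext.lower() == "html" and not name.lower().startswith("google"):
                    relative = (Path(dirpath) / name).relative_to(root).as_posix()
                    yield f"{url_root}/{relative}"


def demo():
    """Build a throwaway site tree and list the URLs for two selected folders."""
    files = ["marketing/index.html", "marketing/2014/promo.html",
             "sales/google1234.html", "sales/prices.HTML", "private/secret.html"]
    with tempfile.TemporaryDirectory() as site:
        for rel in files:
            path = Path(site, rel)
            path.parent.mkdir(parents=True, exist_ok=True)
            path.write_text("<html></html>")
        return list(sitemap_urls(site, "http://www.example.com", ["/marketing", "/sales"]))


if __name__ == "__main__":
    for url in demo():
        print(url)
```

The "private" folder never appears in the output because it isn't in the folder list. This is the same effect as leaving a folder out of the Call ParseFolder(...) lines.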

In Closing...

One last thing to consider: I didn't make any changes to the Robots.asp file in this blog. That being said, when you do not want specific paths crawled, you should add rules to your Robots.txt file to disallow those paths. For example, here is a simple Robots.txt file that allows crawling of your entire website:

# Robots.txt
# For more information on this file see:
# http://www.robotstxt.org/

# Define the sitemap path
Sitemap: http://www.example.com/sitemap.xml

# Make changes for all web spiders
User-agent: *
Allow: /
Disallow:

If you were going to deny crawling on certain paths, you would need to add the specific paths that you do not want crawled to your Robots.txt file like the following example:

# Robots.txt
# For more information on this file see:
# http://www.robotstxt.org/

# Define the sitemap path
Sitemap: http://www.example.com/sitemap.xml

# Make changes for all web spiders
User-agent: *
Disallow: /foo
Disallow: /bar

With that in mind, if you are using the Robots.asp file from my last blog, you would need to update the section of code that defines the paths to match my previous example:

Response.Write "# Make changes for all web spiders" & vbCrLf
Response.Write "User-agent: *" & vbCrLf
Response.Write "Disallow: /foo" & vbCrLf
Response.Write "Disallow: /bar" & vbCrLf
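To double-check that the rules do what you expect, you can feed the generated text to a robots.txt parser. Here's a small Python sketch that uses the standard library's urllib.robotparser module; site_maps() requires Python 3.8 or later. The example.com URLs and the /foo and /bar paths are the placeholders from the example above:

```python
from urllib.robotparser import RobotFileParser

# The Robots.txt text from the example above, one directive per line.
ROBOTS_TXT = """\
# Robots.txt
# For more information on this file see:
# http://www.robotstxt.org/

# Define the sitemap path
Sitemap: http://www.example.com/sitemap.xml

# Make changes for all web spiders
User-agent: *
Disallow: /foo
Disallow: /bar
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for path in ["/", "/marketing/index.html", "/foo/page.html", "/foobar.html", "/bar"]:
    allowed = parser.can_fetch("*", "http://www.example.com" + path)
    print(path, "allowed" if allowed else "blocked")

print(parser.site_maps())  # the Sitemap: lines that the parser found
```

Notice that /foobar.html is blocked too. Robots.txt rules are prefix matches, so use "Disallow: /foo/" if you only want to block the folder.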

I hope this helps. ;-]

Note: This blog was originally posted at http://blogs.msdn.com/robert_mcmurray/

How to Create a Blind Drop WebDAV Share

17 September 2013 • by Bob • IIS, WebDAV, URL Rewrite

I had an interesting WebDAV question earlier today that I had not considered before: how can someone create a "Blind Drop Share" using WebDAV? By way of explanation, a Blind Drop Share is a path where users can copy files but never see the files that are in the directory. You can set up something like this by using NTFS permissions, but that environment can be a little difficult to set up and maintain.

With that in mind, I decided to research a WebDAV-specific solution that didn't require mangling my NTFS permissions. In the end it was pretty easy to achieve, so I thought that it would make a good blog for anyone who wants to do this.

A Little Bit About WebDAV

NTFS permissions contain access controls that configure the directory-listing behavior for files and folders. If you modify those settings, you can control who can see files and folders when they connect to your shared resources. However, the WebDAV module that ships with IIS has no built-in features that approximate the NTFS behavior. That being said, there is an interesting WebDAV quirk that you can use to restrict directory listings, and I will explain how that works.

WebDAV uses the PROPFIND command to retrieve the properties for files and folders, and the WebDAV Redirector uses the response from a PROPFIND command to display a directory listing. (Note: Official WebDAV terminology has no concept of files and folders; those physical objects are respectively called Resources and Collections in WebDAV jargon. That being said, I will use files and folders throughout this blog post for ease of understanding.)

In any event, one of the HTTP headers that WebDAV uses with the PROPFIND command is the Depth header, which is used to specify how deep the folder/collection traversal should go:

- If you sent a PROPFIND command for the root of your website with a Depth:0 header/value, you would get the properties for just the root directory, with no files listed. A Depth:0 header/value only retrieves properties for the single resource that you requested.

- If you sent a PROPFIND command for the root of your website with a Depth:1 header/value, you would get the properties for every file and folder in the root of your website. A Depth:1 header/value retrieves properties for the resource that you requested and its immediate children.

- If you sent a PROPFIND command for the root of your website with a Depth:infinity header/value, you would get the properties for every file and folder in your entire website. A Depth:infinity header/value retrieves properties for every resource regardless of its depth in the hierarchy. (Note that retrieving directory listings with infinite depth is disabled by default in IIS 7 and IIS 8 because it can be CPU intensive.)
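To make the difference between the three Depth values concrete, here's a toy Python model of which resources a PROPFIND response would describe. It isn't a WebDAV client. The folder tree is hypothetical: it borrows names from the directory listing later in this post and adds a made-up /scripts/example.js file.

```python
# A toy model of PROPFIND Depth semantics, not a WebDAV client.
# SITE is a hypothetical folder tree; "/scripts/example.js" is made up.
SITE = {
    "/": ["/aspnet_client/", "/scripts/", "/default.aspx", "/iisstart.htm"],
    "/scripts/": ["/scripts/example.js"],
}


def propfind_scope(tree: dict, path: str, depth: str) -> list:
    """Return the resources whose properties a PROPFIND on `path` would describe."""
    result = [path]  # every Depth value includes the requested resource itself
    if depth == "0":
        return result
    if depth == "1":
        return result + tree.get(path, [])  # plus its immediate children only
    if depth.lower() == "infinity":
        stack = list(reversed(tree.get(path, [])))
        while stack:  # depth-first walk of every descendant
            member = stack.pop()
            result.append(member)
            stack.extend(reversed(tree.get(member, [])))
        return result
    raise ValueError(f"unsupported Depth value: {depth!r}")


if __name__ == "__main__":
    for depth in ("0", "1", "infinity"):
        print(depth, propfind_scope(SITE, "/", depth))
```

With Depth forced to 0, a client only ever learns about the resource it explicitly asked for. That is the behavior the rest of this post relies on.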

By analyzing the above information, it should be obvious that what you need to do is to restrict users to using a Depth:0 header/value. But that's where this scenario gets interesting: if your end-users are using the Windows WebDAV Redirector or other similar technology to map a drive to your HTTP website, you have no control over the value of the Depth header. So how can you restrict that?

In the past I would have written a custom native-code HTTP module or ISAPI filter to modify the value of the Depth header. But once you understand how WebDAV works, you can use the URL Rewrite module to modify the headers of incoming HTTP requests to accomplish some pretty cool things, like modifying the values of WebDAV-related HTTP headers.

Adding URL Rewrite Rules to Modify the WebDAV Depth Header

Here's how I configured URL Rewrite to set the value of the Depth header to 0, which allowed me to create a "Blind Drop" WebDAV site:

- Open the URL Rewrite feature in IIS Manager for your website.

Click image to expand - Click the Add Rules link in the Actionspane.

Click image to expand - When the Add Rules dialog box appears, highlight Blank rule and click OK.

Click image to expand - When the Edit Inbound Rulepage appears, configure the following settings:

- Name the rule "Modify Depth Header".

Click image to expand - In the Match URLsection:

- Choose Matches the Pattern in the Requested URL drop-down menu.

- Choose Wildcards in the Using drop-down menu.

- Type a single asterisk "*" in the Pattern text box.

Click image to expand - Expand the Server Variables collection and click Add.

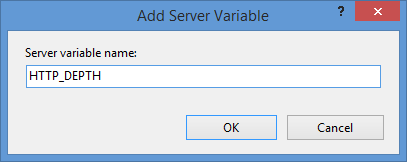

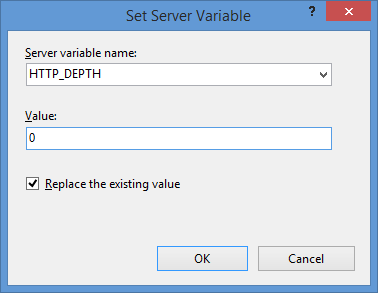

Click image to expand - When the Set Server Variabledialog box appears:

- Type "HTTP_DEPTH" in the Server variable name text box.

- Type "0" in the Value text box.

- Make sure that Replace the existing value checkbox is checked.

- Click OK.

- In the Action group, choose None in the Action typedrop-down menu.

Click image to expand - Click Apply in the Actions pane, and then click Back to Rules.

Click image to expand

- Name the rule "Modify Depth Header".

- Click View Server Variables in the Actionspane.

Click image to expand - When the Allowed Server Variablespage appears, configure the following settings:
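The rule sets a server variable named HTTP_DEPTH because URL Rewrite exposes request headers as CGI-style server variables. The name is an HTTP_ prefix followed by the header name in uppercase, with dashes converted to underscores. The following Python sketch only models that naming convention and what the "Modify Depth Header" rule does to a request. The function names are hypothetical. Because the rule matches every URL and has no conditions, the model always sets the value:

```python
def server_variable_name(header: str) -> str:
    """CGI-style server variable name for a request header (HTTP_ + upper, '-' -> '_')."""
    return "HTTP_" + header.upper().replace("-", "_")


def apply_modify_depth_rule(headers: dict) -> dict:
    """Model of the "Modify Depth Header" rule: match every URL, set Depth to 0.

    Mirrors "Replace the existing value": any Depth header that the client sent,
    in whatever letter case, is dropped and replaced with "0".
    """
    rewritten = {k: v for k, v in headers.items() if k.lower() != "depth"}
    rewritten["Depth"] = "0"
    return rewritten


if __name__ == "__main__":
    print(server_variable_name("Depth"))  # HTTP_DEPTH
    print(apply_modify_depth_rule({"Depth": "1", "Host": "www.contoso.com"}))
    # {'Host': 'www.contoso.com', 'Depth': '0'}
```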

If all of these changes were saved to your applicationHost.config file, the resulting XML might resemble the following example - with XML comments added by me to highlight some of the major sections:

<location path="Default Web Site">
  <system.webServer>
    <!-- Start of Security Settings -->
    <security>
      <authentication>
        <anonymousAuthentication enabled="false" />
        <basicAuthentication enabled="true" />
      </authentication>
      <requestFiltering>
        <fileExtensions applyToWebDAV="false" />
        <verbs applyToWebDAV="false" />
        <hiddenSegments applyToWebDAV="false" />
      </requestFiltering>
    </security>
    <!-- Start of WebDAV Settings -->
    <webdav>
      <authoringRules>
        <add roles="administrators" path="*" access="Read, Write, Source" />
      </authoringRules>
      <authoring enabled="true">
        <properties allowAnonymousPropfind="false" allowInfinitePropfindDepth="true">
          <clear />
          <add xmlNamespace="*" propertyStore="webdav_simple_prop" />
        </properties>
      </authoring>
    </webdav>
    <!-- Start of URL Rewrite Settings -->
    <rewrite>
      <rules>
        <rule name="Modify Depth Header" enabled="true" patternSyntax="Wildcard">
          <match url="*" />
          <serverVariables>
            <set name="HTTP_DEPTH" value="0" />
          </serverVariables>
          <action type="None" />
        </rule>
      </rules>
      <allowedServerVariables>
        <add name="HTTP_DEPTH" />
      </allowedServerVariables>
    </rewrite>
  </system.webServer>
</location>

In all likelihood, some of these settings will be stored in your applicationHost.config file, and the remaining settings will be stored in the web.config file of your website.

Testing the URL Rewrite Settings

If you did not have the URL Rewrite rule in place, or if you disabled the rule, then your web server might respond like the following example if you used the WebDAV Redirector to map a drive to your website from a command prompt:

C:\>net use z: http://www.contoso.com/

Enter the user name for 'www.contoso.com': www.contoso.com\robert

Enter the password for www.contoso.com:

The command completed successfully.

C:\>z:

Z:\>dir

Volume in drive Z has no label.

Volume Serial Number is 0000-0000

Directory of Z:\

09/16/2013 08:55 PM <DIR> .

09/16/2013 08:55 PM <DIR> ..

09/14/2013 12:39 AM <DIR> aspnet_client

09/16/2013 08:06 PM <DIR> scripts

09/16/2013 07:55 PM 66 default.aspx

09/14/2013 12:38 AM 98,757 iis-85.png

09/14/2013 12:38 AM 694 iisstart.htm

09/16/2013 08:55 PM 75 web.config

4 File(s) 99,592 bytes

8 Dir(s) 956,202,631,168 bytes free

Z:\>

However, when you have the URL Rewrite rule correctly configured and enabled, connecting to the same website will resemble the following example. Notice how no files or folders are listed:

C:\>net use z: http://www.contoso.com/

Enter the user name for 'www.contoso.com': www.contoso.com\robert

Enter the password for www.contoso.com:

The command completed successfully.

C:\>z:

Z:\>dir

Volume in drive Z has no label.

Volume Serial Number is 0000-0000

Directory of Z:\

09/16/2013 08:55 PM <DIR> .

09/16/2013 08:55 PM <DIR> ..

0 File(s) 0 bytes

2 Dir(s) 956,202,803,200 bytes free

Z:\>

Despite the blank directory listing, you can still retrieve the properties for any file or folder if you know that it exists. So if you were to use the mapped drive from the preceding example, you could still use an explicit directory command for any object that you had uploaded or created:

Z:\>dir default.aspx

Volume in drive Z has no label.

Volume Serial Number is 0000-0000

Directory of Z:\

09/16/2013 07:55 PM 66 default.aspx

1 File(s) 66 bytes

0 Dir(s) 956,202,799,104 bytes free

Z:\>dir scripts

Volume in drive Z has no label.

Volume Serial Number is 0000-0000

Directory of Z:\scripts

09/16/2013 07:52 PM <DIR> .

09/16/2013 07:52 PM <DIR> ..

0 File(s) 0 bytes

2 Dir(s) 956,202,799,104 bytes free

Z:\>

The same is true for creating directories and files; you can create them, but they will not show up in the directory listings after you have created them unless you reference them explicitly:

Z:\>md foobar

Z:\>dir

Volume in drive Z has no label.

Volume Serial Number is 0000-0000

Directory of Z:\

09/16/2013 11:52 PM <DIR> .

09/16/2013 11:52 PM <DIR> ..

0 File(s) 0 bytes

2 Dir(s) 956,202,618,880 bytes free

Z:\>cd foobar

Z:\foobar>copy NUL foobar.txt

1 file(s) copied.

Z:\foobar>dir

Volume in drive Z has no label.

Volume Serial Number is 0000-0000

Directory of Z:\foobar

09/16/2013 11:52 PM <DIR> .

09/16/2013 11:52 PM <DIR> ..

0 File(s) 0 bytes

2 Dir(s) 956,202,303,488 bytes free

Z:\foobar>dir foobar.txt

Volume in drive Z has no label.

Volume Serial Number is 0000-0000

Directory of Z:\foobar

09/16/2013 11:53 PM 0 foobar.txt

1 File(s) 0 bytes

0 Dir(s) 956,202,299,392 bytes free

Z:\foobar>

That wraps it up for today's post, although I should point out that if you see any errors when you are using the WebDAV Redirector, you should take a look at the Troubleshooting the WebDAV Redirector section of my Using the WebDAV Redirector article; I have done my best to list every error and resolution that I have discovered over the past several years.

Note: This blog was originally posted at http://blogs.msdn.com/robert_mcmurray/

Using Classic ASP and URL Rewrite for Dynamic SEO Functionality

31 December 2012 • by Bob • IIS, URL Rewrite, SEO, Classic ASP

I had another interesting situation present itself recently that I thought would make a good blog: how to use Classic ASP with the IIS URL Rewrite module to dynamically generate Robots.txt and Sitemap.xml files.

Overview

Here's the situation: I host a website for one of my family members, and like everyone else on the Internet, he wanted some better SEO rankings. We discussed a few things that he could do to improve his visibility with search engines, and one of the suggestions that I gave him was to keep his Robots.txt and Sitemap.xml files up-to-date. But there was an additional caveat - he uses two separate DNS names for the same website, and that presents a problem for absolute URLs in either of those files. Before anyone points out that it's usually not a good idea to host multiple DNS names on the same content, there are times when this is acceptable; for example, if you are trying to decide which of several DNS names is the best to use, you might want to bind each name to the same IP address and parse your logs to find out which address is getting the most traffic.

In any event, the syntax for both Robots.txt and Sitemap.xml files is pretty easy, so I wrote a couple of simple Classic ASP Robots.asp and Sitemap.asp pages that output the correct syntax and DNS-specific URLs for each domain name, and I wrote some simple URL Rewrite rules that rewrite inbound requests for Robots.txt and Sitemap.xml files to the ASP pages, while blocking direct access to the Classic ASP pages themselves.

All of that being said, there are a couple of quick things that I would like to mention before I get to the code:

- First of all, I chose Classic ASP for the files because it allows the code to run without having to load any additional framework. I could have used ASP.NET or PHP just as easily, but either of those would add overhead that isn't really necessary.

- Second, the website for which I wrote these examples consists entirely of static content that is updated a few times a month. So I wrote the example to parse the physical directory structure for the website's URLs, and I specified a weekly interval for search engines to revisit the website. All of these options can easily be changed. For example, I reused this code a little while later for a website where all of the content was created dynamically from a database, and I updated the code in the Sitemap.asp file to create the URLs from the dynamically-generated content. (That's really easy to do, but outside the scope of this blog.)

That being said, let's move on to the actual code.

Creating the Required Files

There are three files that you will need to create for this example:

- A Robots.asp file to which URL Rewrite will send requests for Robots.txt

- A Sitemap.asp file to which URL Rewrite will send requests for Sitemap.xml

- A Web.config file that contains the URL Rewrite rules

Step 1 - Creating the Robots.asp File

You need to save the following code sample as Robots.asp in the root of your website; this page will be executed whenever someone requests the Robots.txt file for your website. This example is very simple: it checks for the requested hostname and uses that to dynamically create the absolute URL for the website's Sitemap.xml file.

<%

Option Explicit

On Error Resume Next

Dim strUrlRoot

Dim strHttpHost

Dim strUserAgent

Response.Clear

Response.Buffer = True

Response.ContentType = "text/plain"

Response.CacheControl = "public"

Response.Write "# Robots.txt" & vbCrLf

Response.Write "# For more information on this file see:" & vbCrLf

Response.Write "# http://www.robotstxt.org/" & vbCrLf & vbCrLf

strHttpHost = LCase(Request.ServerVariables("HTTP_HOST"))

strUserAgent = LCase(Request.ServerVariables("HTTP_USER_AGENT"))

strUrlRoot = "http://" & strHttpHost

Response.Write "# Define the sitemap path" & vbCrLf

Response.Write "Sitemap: " & strUrlRoot & "/sitemap.xml" & vbCrLf & vbCrLf

Response.Write "# Make changes for all web spiders" & vbCrLf

Response.Write "User-agent: *" & vbCrLf

Response.Write "Allow: /" & vbCrLf

Response.Write "Disallow: " & vbCrLf

Response.End

%>
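Robots.asp builds the Sitemap URL from the HTTP_HOST server variable, so each DNS name that is bound to the site gets its own absolute URL. Here's the same logic sketched in Python, which can be handy for checking the expected output for each host name. The www.example.com and www.example.net host names are placeholders, and robots_txt() is a hypothetical helper, not part of the ASP sample:

```python
def robots_txt(http_host: str) -> str:
    """Build the same Robots.txt text that Robots.asp writes for a given Host header."""
    url_root = "http://" + http_host.lower()  # Robots.asp lowercases HTTP_HOST
    lines = [
        "# Robots.txt",
        "# For more information on this file see:",
        "# http://www.robotstxt.org/",
        "",
        "# Define the sitemap path",
        f"Sitemap: {url_root}/sitemap.xml",
        "",
        "# Make changes for all web spiders",
        "User-agent: *",
        "Allow: /",
        "Disallow: ",
    ]
    # Robots.asp ends every line with vbCrLf, i.e. "\r\n".
    return "\r\n".join(lines) + "\r\n"


if __name__ == "__main__":
    for host in ["www.example.com", "WWW.Example.NET"]:
        sitemap_line = next(line for line in robots_txt(host).splitlines()
                            if line.startswith("Sitemap:"))
        print(sitemap_line)
```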

Step 2 - Creating the Sitemap.asp File

The following example file is also pretty simple, and you would save this code as Sitemap.asp in the root of your website. There is a section in the code where it loops through the file system looking for files with the *.html file extension and only creates URLs for those files. If you want other files included in your results, or you want to change the code from static to dynamic content, this is where you would need to update the file accordingly.
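For reference, the WriteUrl subroutine writes each file that passes the filter as a sitemaps.org url entry, or as a bare URL for the TXT format. The last-modified date is formatted as YYYY-MM-DD. The following Python sketch mirrors that formatting so you can see the expected output. xml.sax.saxutils.escape stands in for Server.HtmlEncode, the UTF-8 byte order mark that the ASP page writes is omitted, and the URLs are placeholders:

```python
import xml.etree.ElementTree as ET
from datetime import datetime
from xml.sax.saxutils import escape

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def write_url(url, last_modified, freq="weekly", fmt="XML"):
    """Format one sitemap entry, mirroring the XML and TXT branches of WriteUrl."""
    if fmt == "XML":
        return ("  <url>\r\n"
                f"    <loc>{escape(url)}</loc>\r\n"  # escapes &, < and >
                f"    <lastmod>{last_modified:%Y-%m-%d}</lastmod>\r\n"
                f"    <changefreq>{freq}</changefreq>\r\n"
                "  </url>\r\n")
    return url + "\r\n"  # TXT sitemaps are just one absolute URL per line


def build_sitemap(entries, fmt="XML"):
    """entries: iterable of (url, last_modified) pairs."""
    body = "".join(write_url(url, modified, "weekly", fmt) for url, modified in entries)
    if fmt != "XML":
        return body
    return ('<?xml version="1.0" encoding="UTF-8"?>\r\n'
            f'<urlset xmlns="{SITEMAP_NS}">\r\n' + body + "</urlset>")


if __name__ == "__main__":
    doc = build_sitemap([("http://www.example.com/", datetime.now()),
                         ("http://www.example.com/a.html?x=1&y=2", datetime(2014, 2, 28, 9, 30))])
    print(doc)
    ET.fromstring(doc.encode("utf-8"))  # raises ParseError if the XML is malformed
```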

```asp
<%
Option Explicit
On Error Resume Next

Response.Clear
Response.Buffer = True
Response.AddHeader "Connection", "Keep-Alive"
Response.CacheControl = "public"

Dim strFolderArray, lngFolderArray
Dim strUrlRoot, strPhysicalRoot, strFormat
Dim strUrlRelative, strExt
Dim objFSO, objFolder, objFile

strPhysicalRoot = Server.MapPath("/")
Set objFSO = Server.CreateObject("Scripting.Filesystemobject")
strUrlRoot = "http://" & Request.ServerVariables("HTTP_HOST")

' Check for XML or TXT format.
If UCase(Trim(Request("format")))="XML" Then
  strFormat = "XML"
  Response.ContentType = "text/xml"
Else
  strFormat = "TXT"
  Response.ContentType = "text/plain"
End If

' Add the UTF-8 Byte Order Mark.
Response.Write Chr(CByte("&hEF"))
Response.Write Chr(CByte("&hBB"))
Response.Write Chr(CByte("&hBF"))

If strFormat = "XML" Then
  Response.Write "<?xml version=""1.0"" encoding=""UTF-8""?>" & vbCrLf
  Response.Write "<urlset xmlns=""http://www.sitemaps.org/schemas/sitemap/0.9"">" & vbCrLf
End If

' Always output the root of the website.
Call WriteUrl(strUrlRoot,Now,"weekly",strFormat)

' --------------------------------------------------
' The following section contains the logic to parse
' the directory tree and return URLs based on the
' static *.html files that it locates. This is where
' you would change the code for dynamic content.
' --------------------------------------------------

strFolderArray = GetFolderTree(strPhysicalRoot)

For lngFolderArray = 1 to UBound(strFolderArray)
  strUrlRelative = Replace(Mid(strFolderArray(lngFolderArray),Len(strPhysicalRoot)+1),"\","/")
  Set objFolder = objFSO.GetFolder(Server.MapPath("." & strUrlRelative))
  For Each objFile in objFolder.Files
    strExt = objFSO.GetExtensionName(objFile.Name)
    If StrComp(strExt,"html",vbTextCompare)=0 Then
      ' Skip files whose names start with "google" (such as Google site-verification files).
      If StrComp(Left(objFile.Name,6),"google",vbTextCompare)<>0 Then
        Call WriteUrl(strUrlRoot & strUrlRelative & "/" & objFile.Name, objFile.DateLastModified, "weekly", strFormat)
      End If
    End If
  Next
Next

' --------------------------------------------------
' End of file system loop.
' --------------------------------------------------

If strFormat = "XML" Then
  Response.Write "</urlset>"
End If

Response.End

' ======================================================================
'
' Outputs a sitemap URL to the client in XML or TXT format.
'
' tmpStrFreq = always|hourly|daily|weekly|monthly|yearly|never
' tmpStrFormat = TXT|XML
'
' ======================================================================

Sub WriteUrl(tmpStrUrl,tmpLastModified,tmpStrFreq,tmpStrFormat)
  On Error Resume Next
  Dim tmpDate : tmpDate = CDate(tmpLastModified)
  ' Check if the request is for XML or TXT and return the appropriate syntax.
  If tmpStrFormat = "XML" Then
    Response.Write " <url>" & vbCrLf
    Response.Write " <loc>" & Server.HtmlEncode(tmpStrUrl) & "</loc>" & vbCrLf
    ' Write the converted date as YYYY-MM-DD for the <lastmod> element.
    Response.Write " <lastmod>" & Year(tmpDate) & "-" & Right("0" & Month(tmpDate),2) & "-" & Right("0" & Day(tmpDate),2) & "</lastmod>" & vbCrLf
    Response.Write " <changefreq>" & tmpStrFreq & "</changefreq>" & vbCrLf
    Response.Write " </url>" & vbCrLf
  Else
    Response.Write tmpStrUrl & vbCrLf
  End If
End Sub

' ======================================================================
'
' Returns a string array of folders under a root path
'
' ======================================================================

Function GetFolderTree(strBaseFolder)
  Dim tmpFolderCount,tmpBaseCount
  Dim tmpFolders()
  Dim tmpFSO,tmpFolder,tmpSubFolder
  ' Define the initial values for the folder counters.
  tmpFolderCount = 1
  tmpBaseCount = 0
  ' Dimension an array to hold the folder names.
  ReDim tmpFolders(1)
  ' Store the root folder in the array.
  tmpFolders(tmpFolderCount) = strBaseFolder
  ' Create file system object.
  Set tmpFSO = Server.CreateObject("Scripting.Filesystemobject")
  ' Loop while we still have folders to process.
  While tmpFolderCount <> tmpBaseCount
    ' Set up a folder object to a base folder.
    Set tmpFolder = tmpFSO.GetFolder(tmpFolders(tmpBaseCount+1))
    ' Loop through the collection of subfolders for the base folder.
    For Each tmpSubFolder In tmpFolder.SubFolders
      ' Increment the folder count.
      tmpFolderCount = tmpFolderCount + 1
      ' Increase the array size.
      ReDim Preserve tmpFolders(tmpFolderCount)
      ' Store the folder name in the array.
      tmpFolders(tmpFolderCount) = tmpSubFolder.Path
    Next
    ' Increment the base folder counter.
    tmpBaseCount = tmpBaseCount + 1
  Wend
  GetFolderTree = tmpFolders
End Function
%>
```

Note: There are two helper methods in the preceding example that I should call out:

- The GetFolderTree() function returns a string array of all the folders that are located under a root folder; you could remove that function if you were generating all of your URLs dynamically.

- The WriteUrl() function outputs an entry for the sitemap file in either XML or TXT format, depending on the format that was requested. For XML output, it also allows you to specify how frequently the page is likely to change (always, hourly, daily, weekly, monthly, yearly, or never), which is written as the sitemap's <changefreq> value.
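If you want the file system loop to pick up more than just *.html files, one way to do it is to replace the single StrComp() test with a lookup against a list of extensions. Here's a minimal sketch of that idea, written as a standalone VBScript file so that you can try it with cscript.exe outside of IIS (which is why it uses CreateObject rather than Server.CreateObject). The IsSitemapExtension() function name and the SITEMAP_EXTENSIONS list are hypothetical, so adjust them for your own content.

```vbscript
Option Explicit

' Hypothetical pipe-delimited list of extensions to include in the sitemap;
' adjust it to match the content types on your website.
Const SITEMAP_EXTENSIONS = "|htm|html|pdf|"

' Returns True if the extension (without the leading period) is in the list.
Function IsSitemapExtension(strExt)
  IsSitemapExtension = False
  ' Files without an extension are never included.
  If Len(strExt) = 0 Then Exit Function
  ' Wrap the extension in delimiters so that only whole entries match
  ' (for example, "tm" does not match "htm"); vbTextCompare ignores case.
  If InStr(1, SITEMAP_EXTENSIONS, "|" & strExt & "|", vbTextCompare) > 0 Then
    IsSitemapExtension = True
  End If
End Function

' Demonstration: GetExtensionName() returns the extension without the period,
' just as it does in the Sitemap.asp loop.
Dim objFSO, strName
Set objFSO = CreateObject("Scripting.FileSystemObject")
For Each strName In Array("default.htm", "About.HTML", "report.pdf", "logo.png", "README")
  WScript.Echo strName & " -> " & IsSitemapExtension(objFSO.GetExtensionName(strName))
Next
```

To use something like this in Sitemap.asp, you would copy the constant and the function into the file (without the demonstration loop) and change the If StrComp(strExt,"html",vbTextCompare)=0 Then line to If IsSitemapExtension(strExt) Then. If you add "asp" to the list, keep in mind that Robots.asp and Sitemap.asp would be listed as well, so you'd want to skip them by name, and the DateLastModified value for a dynamic page reflects when the script file changed rather than when its output changed.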

Step 3 - Creating the Web.config File

The last step is to add the URL Rewrite rules to the Web.config file in the root of your website. The following example is a complete Web.config file, but you could merge the rules into your existing Web.config file if you have already created one for your website. These rules are pretty simple: they rewrite all inbound requests for Robots.txt to Robots.asp, requests for Sitemap.xml to Sitemap.asp?format=XML, and requests for Sitemap.txt to Sitemap.asp?format=TXT. This allows requests for both the XML-based and text-based sitemaps to work, even though the Robots.txt file contains the path to the XML file. The last part of the URL Rewrite syntax returns HTTP 404 errors if anyone tries to send direct requests for either the Robots.asp or Sitemap.asp files; this isn't absolutely necessary, but I like to mask what I'm doing from prying eyes. (I'm kind of geeky that way.)

```xml
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <system.webServer>
    <rewrite>
      <rewriteMaps>
        <clear />
        <rewriteMap name="Static URL Rewrites">
          <add key="/robots.txt" value="/robots.asp" />
          <add key="/sitemap.xml" value="/sitemap.asp?format=XML" />
          <add key="/sitemap.txt" value="/sitemap.asp?format=TXT" />
        </rewriteMap>
        <rewriteMap name="Static URL Failures">
          <add key="/robots.asp" value="/" />
          <add key="/sitemap.asp" value="/" />
        </rewriteMap>
      </rewriteMaps>
      <rules>
        <clear />
        <rule name="Static URL Rewrites" patternSyntax="ECMAScript" stopProcessing="true">
          <match url=".*" ignoreCase="true" negate="false" />
          <conditions>
            <add input="{Static URL Rewrites:{REQUEST_URI}}" pattern="(.+)" />
          </conditions>
          <action type="Rewrite" url="{C:1}" appendQueryString="false" redirectType="Temporary" />
        </rule>
        <rule name="Static URL Failures" patternSyntax="ECMAScript" stopProcessing="true">
          <match url=".*" ignoreCase="true" negate="false" />
          <conditions>
            <add input="{Static URL Failures:{REQUEST_URI}}" pattern="(.+)" />
          </conditions>
          <action type="CustomResponse" statusCode="404" subStatusCode="0" />
        </rule>
        <rule name="Prevent rewriting for static files" patternSyntax="Wildcard" stopProcessing="true">
          <match url="*" />
          <conditions>
            <add input="{REQUEST_FILENAME}" matchType="IsFile" />
          </conditions>
          <action type="None" />
        </rule>
      </rules>
    </rewrite>
  </system.webServer>
</configuration>
```
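The two rewrite maps in the Web.config file behave like key/value lookups on {REQUEST_URI}: if the requested path is a key in the "Static URL Rewrites" map, the request is rewritten to that key's value, and if it is a key in the "Static URL Failures" map, the request gets an HTTP 404. The following standalone VBScript sketch mimics that decision with Scripting.Dictionary objects so that you can see where each request ends up. It's only an approximation of what the URL Rewrite module does: the ResolveRequest() function name is hypothetical, and the sketch ignores the third rule.

```vbscript
Option Explicit

' Hypothetical stand-ins for the two rewrite maps in the Web.config file.
' Keys are compared case-insensitively, so set CompareMode before adding items.
Dim dicRewrites, dicFailures
Set dicRewrites = CreateObject("Scripting.Dictionary")
dicRewrites.CompareMode = vbTextCompare
dicRewrites.Add "/robots.txt", "/robots.asp"
dicRewrites.Add "/sitemap.xml", "/sitemap.asp?format=XML"
dicRewrites.Add "/sitemap.txt", "/sitemap.asp?format=TXT"

Set dicFailures = CreateObject("Scripting.Dictionary")
dicFailures.CompareMode = vbTextCompare
dicFailures.Add "/robots.asp", "/"
dicFailures.Add "/sitemap.asp", "/"

' Returns the URL that would be executed, or "404" for a blocked request.
' The maps are checked in the same order as the two rules, and the first
' match wins (both rules use stopProcessing="true").
Function ResolveRequest(strRequestUri)
  If dicRewrites.Exists(strRequestUri) Then
    ResolveRequest = dicRewrites(strRequestUri)
  ElseIf dicFailures.Exists(strRequestUri) Then
    ResolveRequest = "404"
  Else
    ' No map entry: the request passes through unchanged.
    ResolveRequest = strRequestUri
  End If
End Function

Dim strUri
For Each strUri In Array("/robots.txt", "/Sitemap.XML", "/sitemap.txt", "/sitemap.asp", "/default.htm")
  WScript.Echo strUri & " -> " & ResolveRequest(strUri)
Next
```

The nice thing about driving the rules from rewrite maps is that the rules themselves stay generic; rewriting another path is just one more <add key="..." value="..." /> entry in the appropriate map.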

Summary

That sums it up for this blog; I hope that you get some good ideas from it.

For more information about the syntax in Robots.txt and Sitemap.xml files, see the following URLs:

Note: This blog was originally posted at http://blogs.msdn.com/robert_mcmurray/

Using URL Rewrite with QDIG

28 June 2012 • by Bob • IIS, URL Rewrite

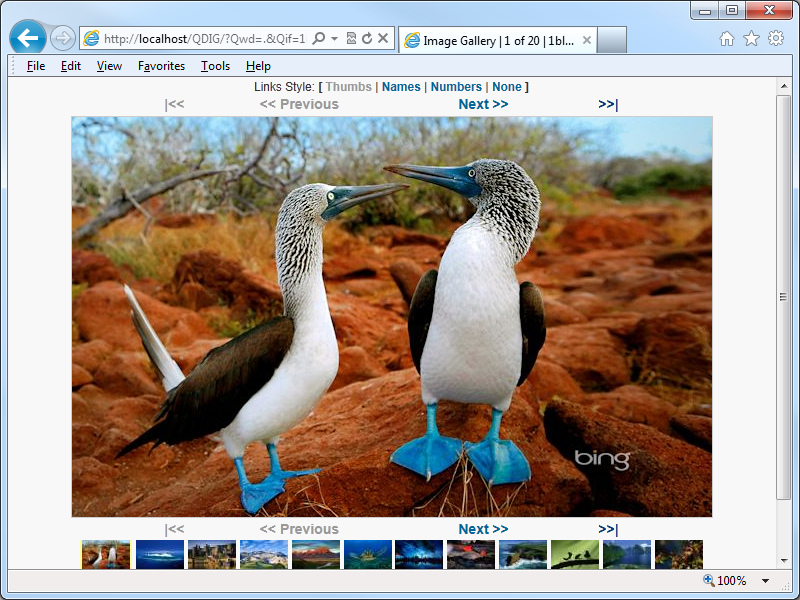

One of the applications that I like to use on my websites is the Quick Digital Image Gallery (QDIG), which is a simple PHP-based image gallery that has just enough features to be really useful without a lot of work on my part to get it working. (Simple is always better - ;-].) Here's a screenshot of QDIG in action with some Bing photos:

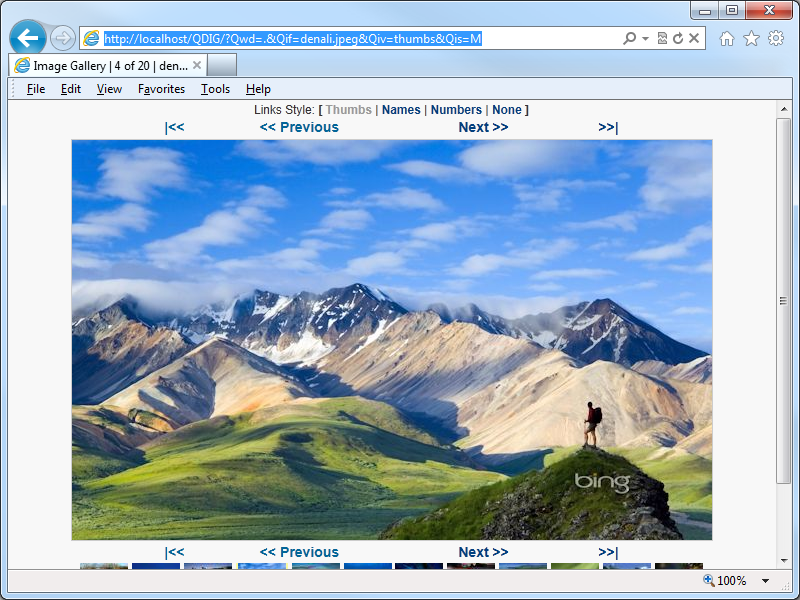

The trouble is, QDIG creates some really heinous query strings; see the URL line in the following screenshot for an example:

I don't know about you, but in today's SEO-friendly world, I hate long and convoluted query strings. Which brings me to one of my favorite subjects: URL Rewrite for IIS.

If you've been around IIS for a while, you probably already know that there are a lot of great things that you can do with the IIS URL Rewrite module, and one of the things that URL Rewrite is great at is cleaning up complex query strings into something that's a little more intuitive.

It would take way too long to describe all of the steps to create the following rules with the URL Rewrite interface, so I'll just include the contents of my web.config file for my QDIG directory, which is a physical folder called "QDIG" that is under the root of my website:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <system.webServer>
    <rewrite>
      <rules>
        <!-- Rewrite the inbound URLs into the correct query string. -->
        <rule name="RewriteInboundQdigURLs" stopProcessing="true">
          <match url="Qif/(.*)/Qiv/(.*)/Qis/(.*)/Qwd/(.*)" />
          <conditions>
            <add input="{REQUEST_FILENAME}" matchType="IsFile" negate="true" />
            <add input="{REQUEST_FILENAME}" matchType="IsDirectory" negate="true" />
          </conditions>
          <action type="Rewrite" url="/QDIG/?Qif={R:1}&amp;Qiv={R:2}&amp;Qis={R:3}&amp;Qwd={R:4}" appendQueryString="false" />
        </rule>
      </rules>
      <outboundRules>
        <!-- Rewrite the outbound URLs into user-friendly URLs. -->
        <rule name="RewriteOutboundQdigURLs" preCondition="ResponseIsHTML" enabled="true">
          <match filterByTags="A, Img, Link" pattern="^(.*)\?Qwd=([^=&amp;]+)&amp;(?:amp;)?Qif=([^=&amp;]+)&amp;(?:amp;)?Qiv=([^=&amp;]+)&amp;(?:amp;)?Qis=([^=&amp;]+)(.*)" />
          <action type="Rewrite" value="/QDIG/Qif/{R:3}/Qiv/{R:4}/Qis/{R:5}/Qwd/{R:2}" />
        </rule>
        <!-- Rewrite the outbound relative QDIG URLs for the correct path. -->
        <rule name="RewriteOutboundRelativeQdigFileURLs" preCondition="ResponseIsHTML" enabled="true">
          <match filterByTags="Img" pattern="^\.\/qdig-files/(.*)$" />
          <action type="Rewrite" value="/QDIG/qdig-files/{R:1}" />
        </rule>
        <!-- Rewrite the outbound relative file URLs for the correct path. -->
        <rule name="RewriteOutboundRelativeFileURLs" preCondition="ResponseIsHTML" enabled="true">
          <match filterByTags="Img" pattern="^\.\/(.*)$" />
          <action type="Rewrite" value="/QDIG/{R:1}" />
        </rule>
        <preConditions>
          <!-- Define a precondition so the outbound rules only apply to HTML responses. -->
          <preCondition name="ResponseIsHTML">
            <add input="{RESPONSE_CONTENT_TYPE}" pattern="^text/html" />
          </preCondition>
        </preConditions>
      </outboundRules>
    </rewrite>
  </system.webServer>
</configuration>
```

Here's the breakdown of what all of the rules do:

- RewriteInboundQdigURLs - This rule rewrites inbound user-friendly URLs into the query string values that QDIG expects. I should point out that I rearrange the parameters from the way that QDIG would normally define them; more specifically, I pass the Qwd parameter last, and I do this so that the current directory "." does not get ignored by browsers and break the functionality.

- RewriteOutboundQdigURLs - This rule will rewrite outbound HTML so that all anchor, link, and image tags are in the new format. This is where I actually rearrange the parameters that I mentioned earlier.

- RewriteOutboundRelativeQdigFileURLs - There are several files that QDIG creates in the "/qdig-files/" folder of your application; when the application paths are rewritten, you need to make sure that those paths don't break. For example, once you have a path that is rewritten as http://localhost/QDIG/Qif/foo.jpg/Qiv/name/Qis/M/Qwd/, the browser resolves relative paths against that URL as though it were a physical path; since it isn't, you'd get HTTP 404 errors throughout your application.

- RewriteOutboundRelativeFileURLs - This rule is related to the previous rule, although this works for the files in your actual gallery. Since the paths are relative, you need to make sure that they will work in the rewritten URL namespace.

- ResponseIsHTML - This precondition checks whether an outbound response is HTML; the three outbound rules use it to make sure that URL Rewrite doesn't try to rewrite responses where it's not warranted.
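To see how the inbound and outbound rules fit together, the following standalone VBScript sketch applies the same two regular expressions by using the VBScript RegExp object. The function names and the sample link are hypothetical, and URL Rewrite's own regex engine and HTML tag filtering are only approximated here; for the inbound rule, the input is the path relative to the QDIG folder, which is what the rule's match url is tested against.

```vbscript
Option Explicit

' Hypothetical sketch of the QDIG rules using the VBScript RegExp object.
' URL Rewrite ignores case by default, so IgnoreCase is set to True here.

' Inbound: friendly path (relative to the QDIG folder) -> QDIG query string.
Function InboundToQueryString(strPath)
  Dim objRegEx, objMatches, objSub
  Set objRegEx = New RegExp
  objRegEx.Pattern = "Qif/(.*)/Qiv/(.*)/Qis/(.*)/Qwd/(.*)"
  objRegEx.IgnoreCase = True
  Set objMatches = objRegEx.Execute(strPath)
  If objMatches.Count = 0 Then
    ' No match: the rule does not apply and the path is left alone.
    InboundToQueryString = strPath
    Exit Function
  End If
  ' SubMatches(0) through SubMatches(3) correspond to {R:1} through {R:4}.
  Set objSub = objMatches(0).SubMatches
  InboundToQueryString = "/QDIG/?Qif=" & objSub(0) & "&Qiv=" & objSub(1) & _
    "&Qis=" & objSub(2) & "&Qwd=" & objSub(3)
End Function

' Outbound: QDIG link with a query string -> friendly path with Qwd moved last.
' The optional "amp;" lets the pattern match both & and &amp; in the HTML.
Function OutboundToFriendlyUrl(strHref)
  Dim objRegEx
  Set objRegEx = New RegExp
  objRegEx.Pattern = "^(.*)\?Qwd=([^=&]+)&(?:amp;)?Qif=([^=&]+)&(?:amp;)?Qiv=([^=&]+)&(?:amp;)?Qis=([^=&]+)(.*)"
  objRegEx.IgnoreCase = True
  If objRegEx.Test(strHref) Then
    ' The pattern consumes the whole string, so Replace() returns only the new path.
    OutboundToFriendlyUrl = objRegEx.Replace(strHref, "/QDIG/Qif/$3/Qiv/$4/Qis/$5/Qwd/$2")
  Else
    OutboundToFriendlyUrl = strHref
  End If
End Function

WScript.Echo OutboundToFriendlyUrl("./?Qwd=.&amp;Qif=foo.jpg&amp;Qiv=name&amp;Qis=M")
WScript.Echo InboundToQueryString("Qif/foo.jpg/Qiv/name/Qis/M/Qwd/")
```

In this sample, the outbound rule turns the query-string link into /QDIG/Qif/foo.jpg/Qiv/name/Qis/M/Qwd/., and browsers typically normalize the trailing "/." segment to "/", which gives you the same form as the example URL in the list above. The inbound rule then maps that path back to a query string with an empty Qwd value.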

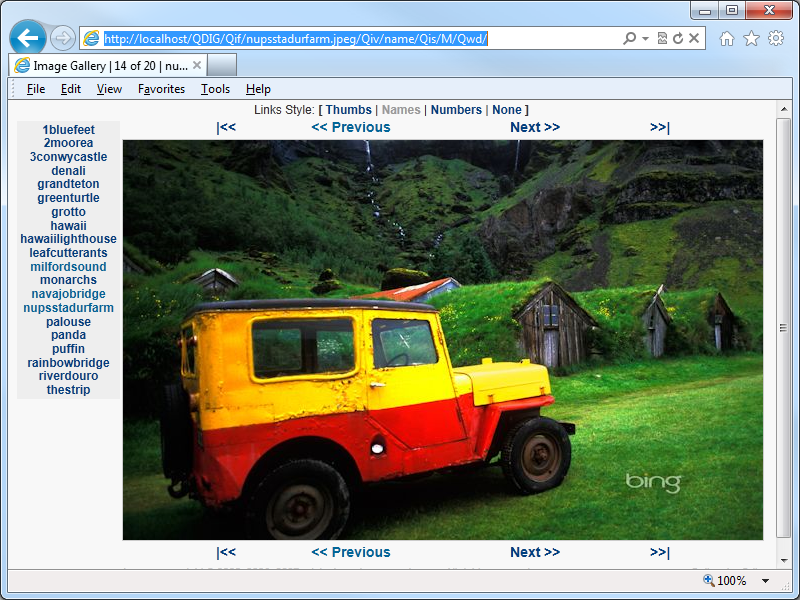

Once you have these rules in place, you get nice user-friendly URLs in QDIG:

I should also point out that these rules also support changing the style from thumbnails to file names to file numbers, etc.

All of that being said, there is one thing that these rules do not support - and that's nested folders under my QDIG application. I don't use subfolders under my QDIG folder; I prefer to create separate folders with the QDIG file in each one, because this makes each gallery self-contained and easily transportable. However, after I had written the text for this blog, I tried to use a subfolder under my QDIG application and that didn't work. Based on what I saw, I'm pretty sure that it would be fairly trivial to write some URL Rewrite rules that would accommodate subfolders, but that's another project for another day. ;-]

Note: This blog was originally posted at http://blogs.msdn.com/robert_mcmurray/